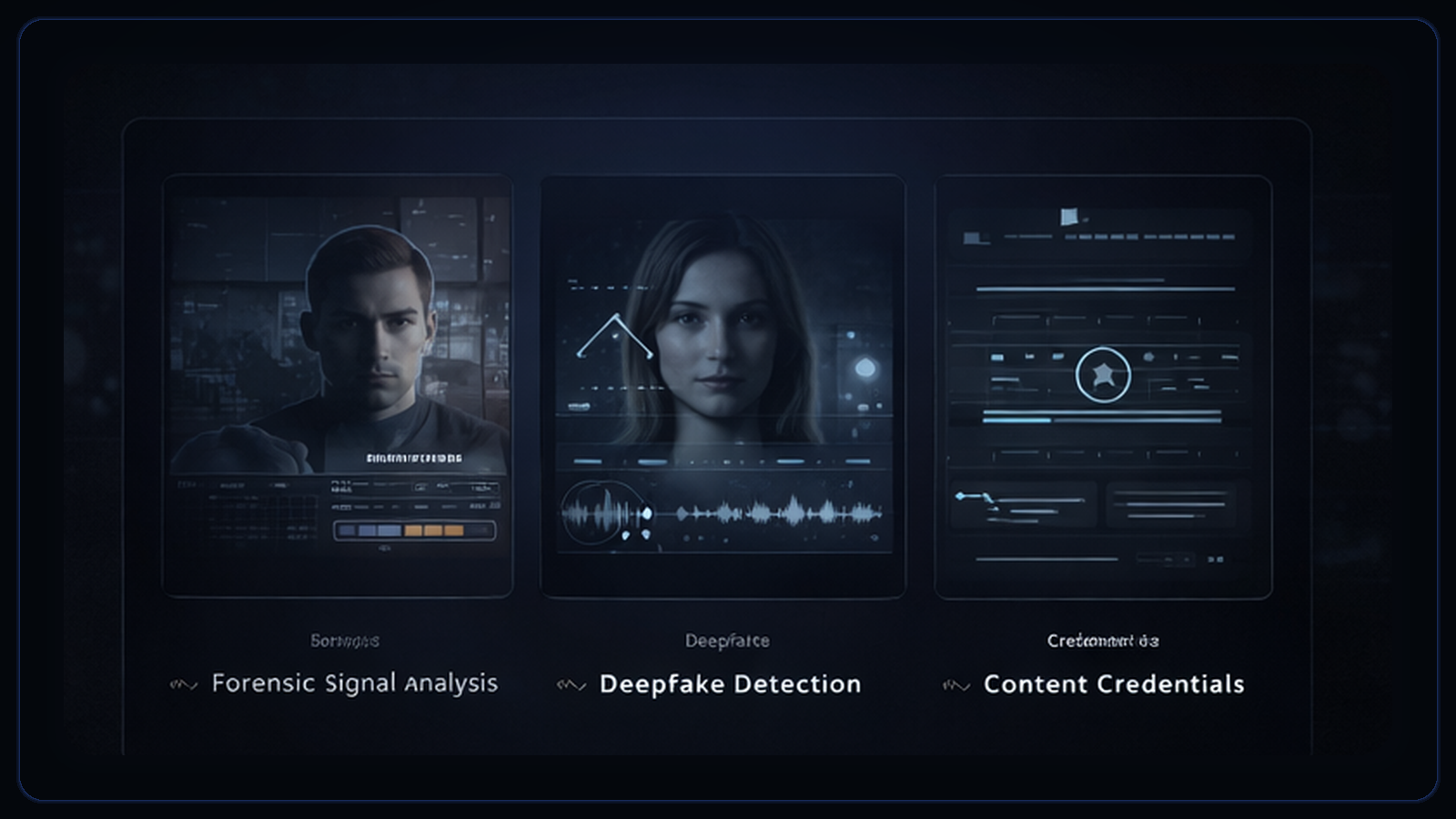

AI Video Detector vs Deepfake Detector vs Content Credentials: What Each One Can and Cannot Prove

These terms are often used interchangeably, but they answer different questions. One inspects the media itself, one may focus on impersonation, and one carries provenance context.

- 01: AI detectors inspect the media itself for multi-signal evidence.

- 02: Deepfake detectors often refer to narrower impersonation-focused systems.

- 03: Content credentials add provenance context when present and preserved.

- 04: None of the three alone can universally prove authenticity.

“AI detector,” “deepfake detector,” and “content credentials” are often treated as if they all do the same job. They do not. The confusion matters because each tool class answers a different question and fails in a different way.

Define the terms clearly

The simplest way to avoid confusion is to keep the underlying question in view: media behavior, impersonation risk, or provenance history.

An AI video detector usually refers to a system that inspects the media itself and estimates whether its patterns resemble generated or manipulated content. DetectVideo falls into this category because it combines visual, temporal, audio, metadata, and provenance signals.

The term “deepfake detector” is often used more narrowly, for systems focused on face swaps, reenactment, voice cloning, or other synthetic impersonation techniques. Some products use the term broadly, but the public expectation is often narrower than the label.

Content credentials are provenance metadata. In the C2PA model, they can describe how a file was created or edited, by whom, and what other assets were involved, provided that information is available and preserved.

A practical comparison

These tools are complementary because they are best at different layers of the authenticity problem.

| Approach | Best at | Cannot prove on its own |
|---|---|---|
| AI video detector | Reviewing the received media for multi-signal evidence of synthesis or manipulation. | That a file is definitively fake or definitively authentic in every case. |
| Deepfake detector | Flagging narrower classes of synthetic impersonation, especially facial or voice-driven manipulations. | That every kind of AI-generated video will be detected, or that a non-flagged clip is genuine. |
| Content credentials | Providing provenance context about origin, edits, and authorship when the metadata is present and intact. | That a file without credentials is fake, or that credentials replace forensic review of the visible media. |

Where each approach helps and where it runs out of road

A strong method in the wrong job still produces confusion, which is why the boundaries matter as much as the strengths.

Multi-signal detection is strongest when you need to assess a suspicious file as it exists now. Provenance is strongest when you can trace origin and edit history through preserved metadata. A narrower deepfake detector can be useful when the threat model is specifically impersonation or face-driven synthetic media.

A detector score does not become proof simply because it is high. A content credential does not become universal proof because it is signed. Each method is bounded by its evidence.

Credentials can be absent for benign reasons. Detectors can be limited by compression, short duration, or missing audio. Narrow deepfake systems can miss manipulations that fall outside the category they were designed to emphasize.
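One way to keep a score bounded by its evidence is to record the known limitations next to it. The following is a minimal sketch, not DetectVideo's implementation: the `DetectorResult` type, its field names, and the thresholds (10 seconds, a 0 to 1 compression-quality scale) are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class DetectorResult:
    """Hypothetical container for a detector score and the clip's properties."""
    score: float                # assumed scale: 0.0 (no synthetic signal) to 1.0
    duration_seconds: float
    has_audio: bool
    compression_quality: float  # assumed scale: 0.0 (heavily degraded) to 1.0


def collect_caveats(result: DetectorResult) -> list[str]:
    """List the limitations that apply to this result.

    The thresholds are illustrative assumptions, not calibrated values.
    """
    caveats = []
    if result.duration_seconds < 10.0:
        caveats.append("short duration")
    if not result.has_audio:
        caveats.append("missing audio")
    if result.compression_quality < 0.5:
        caveats.append("heavy compression")
    return caveats


def describe(result: DetectorResult) -> str:
    """Report the score as evidence with caveats, never as a verdict."""
    caveats = collect_caveats(result)
    note = ", ".join(caveats) if caveats else "none recorded"
    return f"score={result.score:.2f} (evidence, not proof); limitations: {note}"
```

Reporting the caveats in the same string as the score makes it harder for a high number to be read as proof when the clip is short, silent, or degraded.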

How to use them together without collapsing them into one thing

A mature workflow keeps three questions separate: what the file looks like, what manipulation class may be present, and what origin evidence survived.

- Does the file contain suspicious synthetic patterns?

- Does the file appear to be a specific kind of impersonation or deepfake?

- Does the file carry trustworthy provenance information about its origin?

Provenance answers the third question. Forensic analysis answers the first. Narrower deepfake systems often aim at the second. The workflow breaks when teams treat them as interchangeable.
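The separation described above can be sketched as a record that stores one answer per question and never merges them into a single verdict. This is an illustrative sketch only: the `Finding` values, the `ReviewRecord` type, and its field names are assumptions, not part of any tool or standard.

```python
from dataclasses import dataclass
from enum import Enum


class Finding(Enum):
    """Hypothetical three-way outcome for a single review question."""
    YES = "yes"
    NO = "no"
    UNKNOWN = "unknown"  # evidence missing or inconclusive


@dataclass(frozen=True)
class ReviewRecord:
    """One answer per question; the fields are deliberately not combined."""
    synthetic_patterns: Finding   # question 1: forensic analysis
    impersonation: Finding        # question 2: narrower deepfake systems
    trusted_provenance: Finding   # question 3: provenance


def open_questions(record: ReviewRecord) -> list[str]:
    """Return the questions that still lack an answer and need follow-up."""
    answers = {
        "synthetic patterns": record.synthetic_patterns,
        "impersonation": record.impersonation,
        "trusted provenance": record.trusted_provenance,
    }
    return [name for name, finding in answers.items() if finding is Finding.UNKNOWN]
```

In this sketch, a `NO` on `trusted_provenance` means only that no trustworthy credentials survived. It says nothing about whether the file is fake, which is why the record has no combined "authentic" field.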

Layer the review instead of replacing one method with another

DetectVideo is most effective when used as one layer in a broader authenticity process: multi-signal review for the file itself, provenance checks where available, and human judgment for the final call.

Related articles

Content credentials are provenance metadata, not magic labels. They can strengthen origin claims when present, but they do not replace forensic review.

Detection is hard because the clips people care about most are often short, degraded, reposted, or missing evidence modules entirely.

A defensible workflow preserves the file, separates review stages, records missing evidence, and defines when to escalate instead of guessing.

About this article

Written by DetectVideo Editorial Team.

Technical review by DetectVideo Methodology Review.

Last updated April 3, 2026. Related articles are included for readers who want adjacent context, terminology, and workflow guidance.